Since 2021, I have been a PhD student at HKUST, co-advised by Lionel Ni (President of HKUST-GZ) and Harry Shum (Former Executive Vice President of Microsoft).

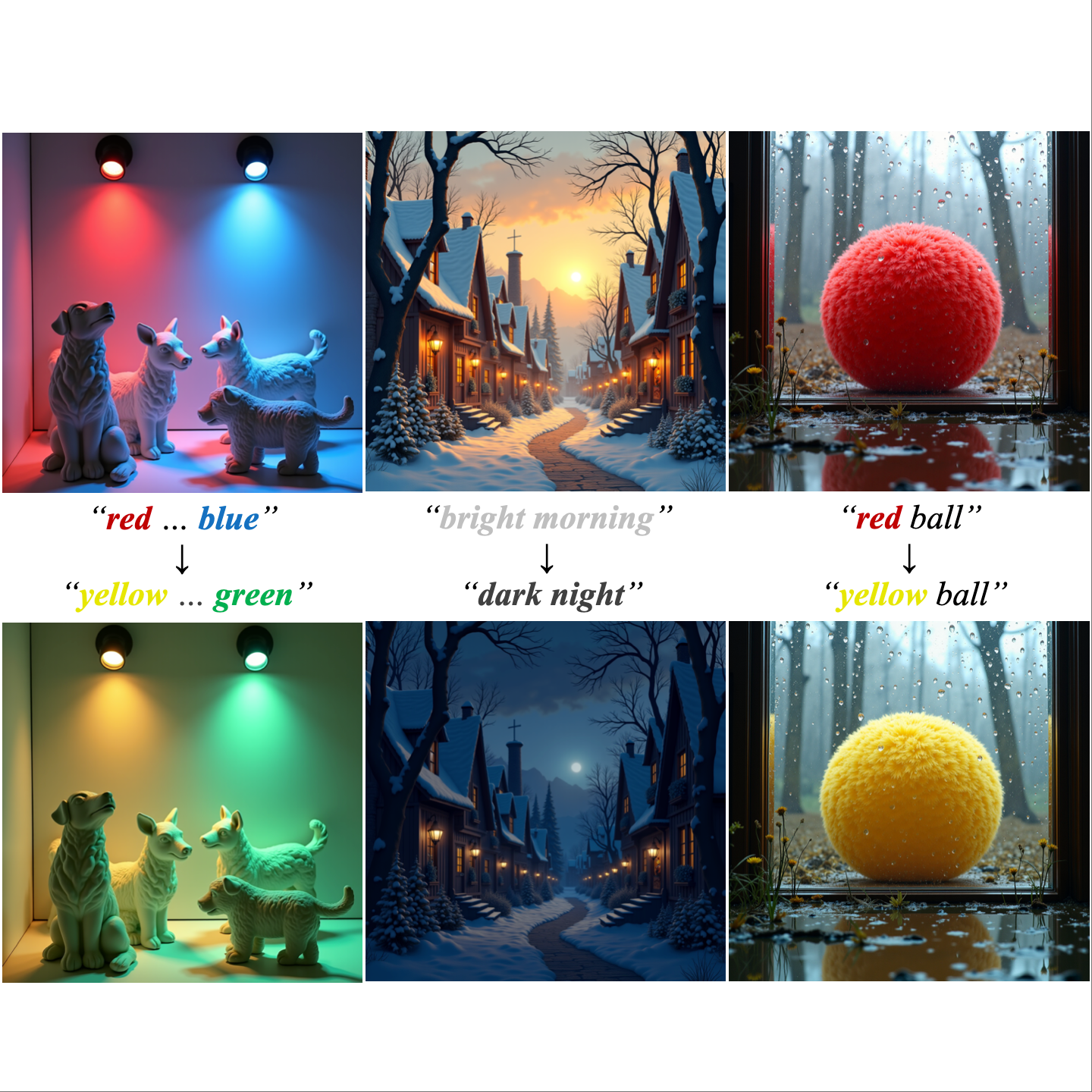

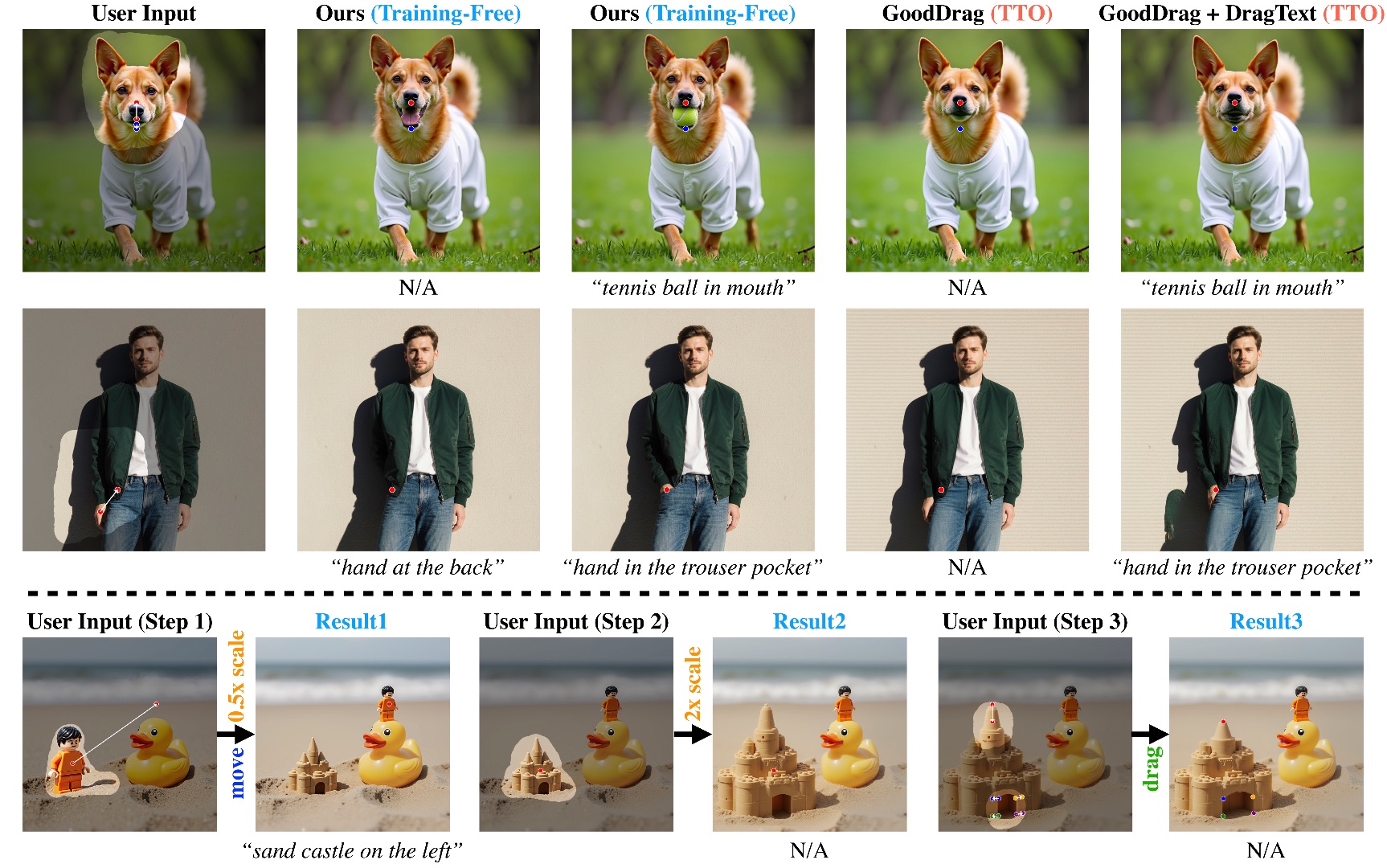

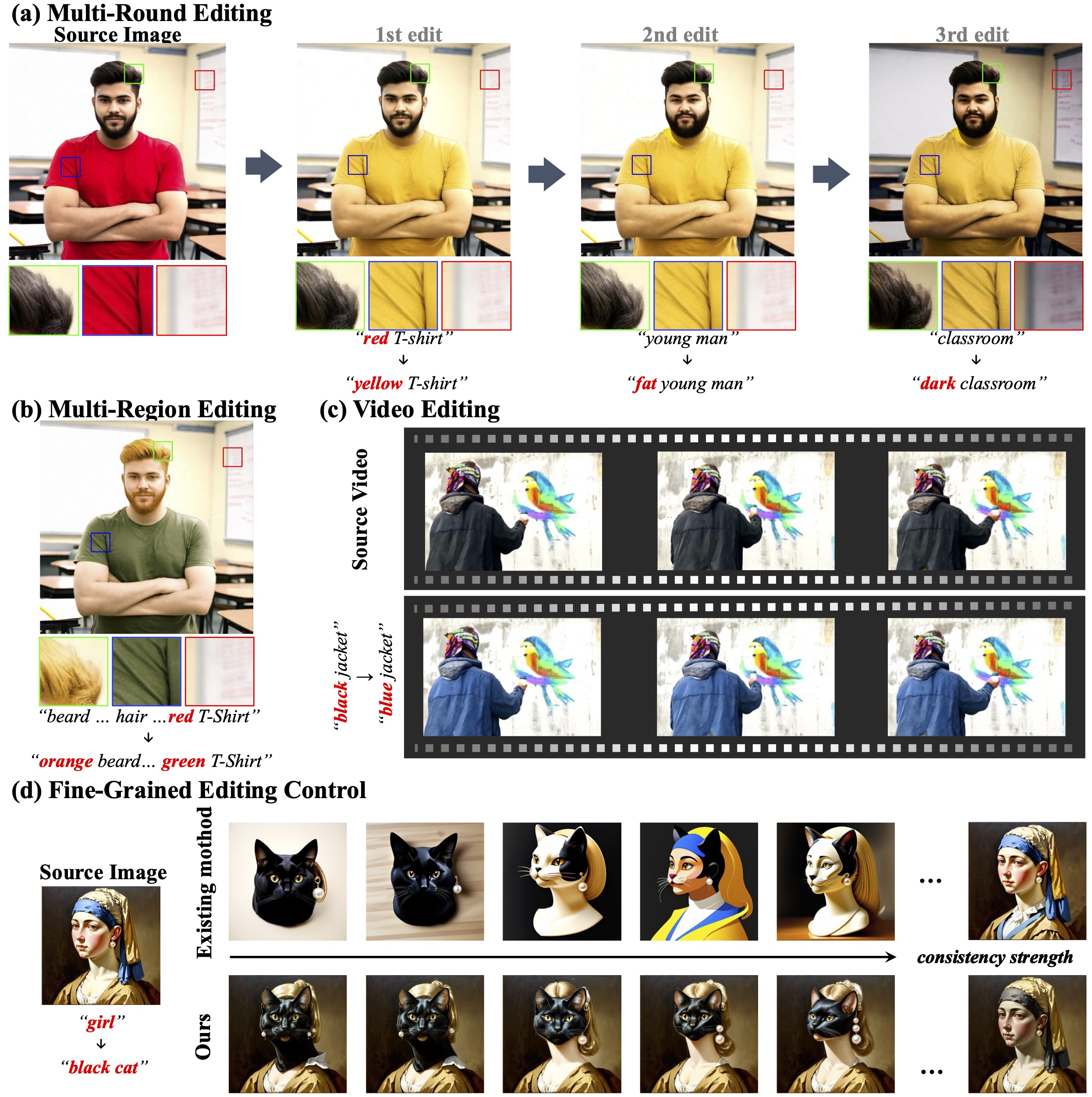

My research focuses on image and video generation, visual editing, and talking head synthesis.

In May 2026, I will join Meta as a research intern, working on video generation with Lu Yuan.

Previously, from May 2025 to April 2026, I interned at IDEA and StepFun, collaborating with Lei Zhang and Gang Yu, and co-authored nine papers on image/video generation and editing.

Concurrently, I led R&D teams working on video generation and agents at Xiaobing.ai.

From April 2023 to April 2025, I was a co-founder of Morph Studio, a video generation startup with over 1.5 million users.

Earlier, starting in 2022, I worked as a research intern at Xiaobing.ai, collaborating with Baoyuan Wang and Duomin Wang.

From August 2019 to May 2021, I worked with Carlo H. Séquin at UC Berkeley on the graphics project JIPCAD.

I received my B.S. from the Department of Computer Science and Technology (Honors Science Program) and completed the Honors Youth Program (少年班) at Xi'an Jiaotong University in 2021.